Mozilla's research should come in handy for everyone.

The hype surrounding Artificial Intelligence (AI) has been at an all-time high, thanks to the many innovations made by the likes of Hugging Face, OpenAI, NVIDIA, Google, and others in the field.

But, as with most hyped-up things, the excitement eventually cools off: as adoption grows, the technology becomes the norm and settles into maturity.

For AI, that peak appears to have been brought about by Generative AI.

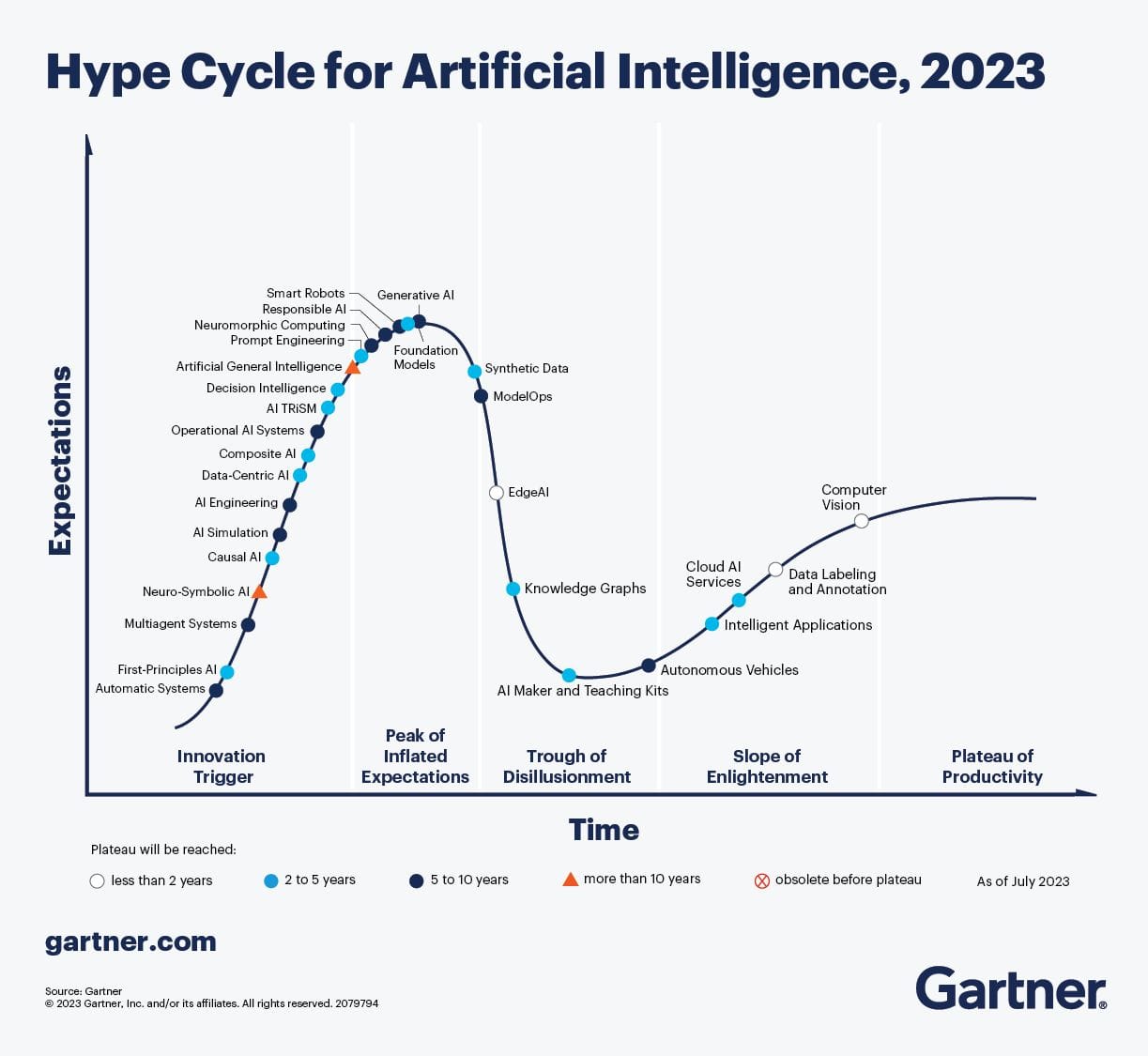

If you look at the “Hype Cycle” that Gartner published in 2023, you can see that we are fast approaching the point where all this AI hype starts to decline.

Just as we approach the inevitable downturn of this hype cycle, Mozilla has published a paper that outlines the need for more openness in AI and how we could tackle this challenge.

Mozilla teamed up with the Columbia Institute of Global Politics to bring together more than 40 scholars from organizations such as Computer Says Maybe, Hugging Face, Meta, IBM, EleutherAI, GitHub, and others.

They have been studying how openness can benefit AI around the world, and one major outcome of that work is a paper detailing how organizations can approach this.

According to Mozilla:

> The paper surveys existing approaches to defining openness in AI models and systems, and then proposes a descriptive framework to understand how each component of the foundation model stack contributes to openness.

The paper lists many reasons why we should pursue openness in AI. Some key highlights include:

Of course, they note that this list is not exhaustive, but you get the general idea. They also argue that AI openness requires a “multidimensional approach”.

So, Mozilla and the scholars seem to have done thorough research on how openness should be handled when it comes to AI.

If you want to dive deeper, you can read the full paper, which covers many more important details.

💬 What are your thoughts on this? Should there be more openness in AI?

Stay updated with relevant Linux news, discover new open source apps, follow distro releases and read opinions